How AI Turns Security Camera Footage into Real-Time Threat Detection at Enterprise Scale

Walk into any enterprise Global Security Operations Center (GSOC) and you will see the same paradox. Wall-to-wall monitors. Thousands of feeds. Petabytes of footage being recorded around the clock. And almost none of it being watched.

That is not an indictment of the operators in those rooms. It is the inevitable result of an industry that has spent twenty years optimizing for the wrong thing (capturing more video, at higher resolution, for longer retention windows) without solving for what comes next: actually understanding what the cameras are seeing, in real time, across an enterprise footprint.

Less than 1% of all surveillance video is ever watched live. The remaining 99% only delivers value when something has already gone wrong, when an investigator goes looking for what the cameras quietly captured before, during, and after the incident.

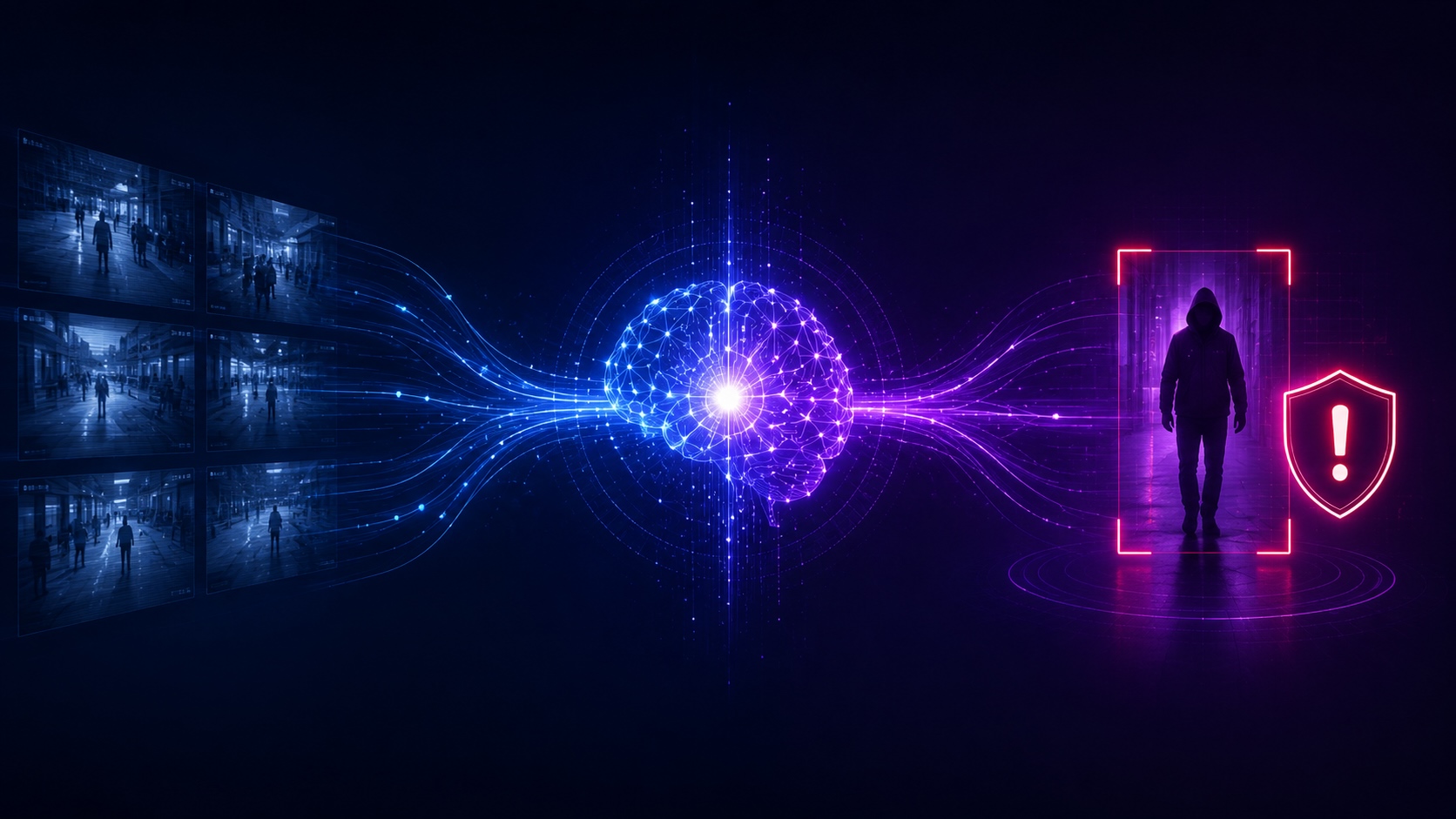

That gap between footage captured and footage understood is where modern AI is rewriting the rules. Not by replacing the cameras. Not by replacing the operators. But by giving the cameras and the people running them something they have never had: continuous, contextual comprehension of every frame, on every feed, all at once.

Key Takeaways

- AI converts security camera footage from passive recordings into real-time threat detection by applying continuous contextual reasoning across every feed at the same time.

- Reasoning Vision-Language Models distinguish genuine threats from routine activity by interpreting behavior, environment, and intent, not by matching pixels against static rules.

- Intelligent monitoring extends operator capability across every camera without expanding headcount, shifting the role from watching feeds to acting on validated events.

- Continuous metadata generation makes both live and historical security camera footage searchable in seconds, transforming investigations that used to take days into questions answered in conversation.

Enterprise Video Surveillance Is Generating More Footage Than Any Team Can Absorb

The math has been getting worse for a decade.

Camera counts climb. Resolutions climb. Retention windows climb. Storage spend is climbing right alongside them, and shows no sign of slowing. Every new facility, every new threat vector, every new compliance requirement adds more cameras to the network and more hours of footage to the archive.

The human side of the equation has not kept up. It cannot. A single GSOC operator may now be responsible for hundreds of feeds spanning multiple sites, time zones, and threat profiles. The teams behind those operators are notoriously hard to keep intact: more than 40% of security service providers say turnover is their single biggest operational challenge. Institutional knowledge walks out the door on a quarterly basis.

And even when seats are filled and operators are well-trained, biology pushes back. Research has shown that after roughly twenty minutes of continuous monitoring on a single screen, up to 90% of activity goes undetected. That is not a training issue. It is not a motivation issue. It is the cognitive ceiling of human attention, applied to a surveillance load it was never built to carry.

This is the systemic reality that intelligent monitoring is built to address. Though now a cliché, it bears repeating: this is not a people problem. It is a systems problem.

Why Rule-Based Detection Falls Short of Making Security Camera Footage Actionable

For most of the last fifteen years, the industry's answer to this gap has been rule-based analytics. Motion triggers. Virtual tripwires. Line-crossing rules. Basic object classification. Build the right rules, the thinking went, and the cameras can flag what matters automatically.

In practice, that approach has produced a different outcome: an alert flood that overwhelms the very operators it was meant to help.

A perimeter line-crossing rule fires for a deer, an authorized employee taking a shortcut, and an actual intruder with the same urgency. A motion trigger activates for wind-blown debris and for a person climbing a fence. An access exception fires for a held-open door at a loading dock and for a forced entry at a server room. None of those signals carries the context to tell an operator which one matters.
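To make the limitation concrete, here is a minimal Python sketch of a line-crossing rule. The `Track` type, the `TRIPWIRE_Y` position, and the labels are hypothetical and do not correspond to any vendor's API. The point is that the rule's only input is geometry, so the deer, the employee, and the intruder all produce the same alert.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical virtual tripwire: a horizontal line at y = 0.5 in normalized
# image coordinates, drawn across a perimeter fence.
TRIPWIRE_Y = 0.5


@dataclass
class Track:
    label: str      # what the object detector thinks it is
    prev_y: float   # vertical position in the previous frame (0.0-1.0)
    curr_y: float   # vertical position in the current frame (0.0-1.0)


def line_crossing_rule(track: Track) -> Optional[str]:
    """Fire when a track moves from one side of the line to the other.

    The label, time of day, and identity are never consulted: every
    crossing gets the same urgency.
    """
    crossed = (track.prev_y < TRIPWIRE_Y) != (track.curr_y < TRIPWIRE_Y)
    return "HIGH" if crossed else None


tracks = [
    Track("deer", 0.40, 0.60),
    Track("person (badged employee, shortcut)", 0.45, 0.55),
    Track("person (intruder)", 0.30, 0.70),
]
for t in tracks:
    print(f"{t.label:40s} -> {line_crossing_rule(t)}")
```

All three tracks print `HIGH`, which is exactly the ambiguity an operator is left to resolve by hand.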

The result is a false alarm rate above 98% at most enterprise SOCs. Operators spend the bulk of their shifts sorting noise from signal. Alert fatigue accumulates. Genuine threats get queued behind dozens of false ones, and the very tools meant to extend operator capacity end up consuming it.

A monitoring approach that produces 98 false alarms for every two real ones is not a security system. It is a screening problem masquerading as one.

Contextual Analysis Turns Security Camera Footage into Surveillance Intelligence

What makes security camera footage genuinely actionable is contextual understanding: interpreting the scene itself, not just the objects inside it.

Context means analyzing relationships, not just detections. The relationship between a person and a place. Between an action and a time of day. Between a specific behavior and what is normal for a given zone. An employee carrying a toolbox through a warehouse during a scheduled shift is routine. An unrecognized individual carrying the same equipment near a server room at 2 a.m. is something else entirely. A person lingering at an emergency exit at noon during shift change is one signal. The same person, same exit, same posture at 2 a.m. on a Sunday is another.
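A toy Python sketch can make those relationships concrete. It is not how a reasoning VLM works internally, since such a model interprets the scene rather than consulting hand-written tables. The `Observation` fields, zone names, and hour ranges below are hypothetical, chosen only to show how the same detected activity leads to different assessments once it is joined with who, where, and when.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    activity: str     # what the detector saw, e.g. "carrying toolbox"
    zone: str         # e.g. "warehouse", "server_room"
    hour: int         # 0-23, local site time
    recognized: bool  # matched to a known, badged employee?
    on_shift: bool    # within that person's scheduled shift?


# Hypothetical per-zone baselines. A real system would learn what is normal
# per site, zone, and hour instead of hard-coding it like this.
NORMAL_HOURS = {"warehouse": range(6, 22), "server_room": range(8, 18)}
SENSITIVE_ZONES = {"server_room"}


def assess(obs: Observation) -> str:
    """Return 'routine' or an escalation listing the contextual reasons."""
    reasons = []
    if not obs.recognized:
        reasons.append("unrecognized individual")
    elif not obs.on_shift:
        reasons.append("outside scheduled shift")
    if obs.hour not in NORMAL_HOURS.get(obs.zone, range(0, 24)):
        reasons.append(f"unusual hour for {obs.zone}")
    if obs.zone in SENSITIVE_ZONES and reasons:
        reasons.append("sensitive zone")
    return "routine" if not reasons else "escalate: " + ", ".join(reasons)


day = Observation("carrying toolbox", "warehouse", 14, True, True)
night = Observation("carrying toolbox", "server_room", 2, False, False)
print(assess(day))    # routine
print(assess(night))  # escalate: unrecognized individual, unusual hour for server_room, sensitive zone
```

The activity string is identical in both observations; only the surrounding context changes the outcome.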

This distinction matters because enterprise environments are complex. What is normal varies by site, by zone, by hour, by day of week. Effective security footage analysis is not pattern matching. It is pattern interpretation, anchored to the specific context of the place being protected.

The technology to do this has gone through three architectural generations:

- Convolutional neural networks that analyzed spatial features in individual frames: capable of identifying objects, but blind to time, sequence, and context.

- Temporal deep learning models that introduced sequence understanding but remained constrained to predefined activity categories: better at recognizing "a person running," but still unable to reason about whether the running mattered.

- Reasoning Vision-Language Models that fuse visual perception with language to interpret scenes, behaviors, and intent across long-range temporal sequences, and to express that interpretation in terms a security operator can act on.

Reasoning VLMs do not just see motion. They understand sequences, cause-and-effect, and behavioral evolution. They can hold context across minutes of video, reason about what they are observing, and surface validated events where behavior, environment, and intent all point to something that genuinely warrants attention.

The practical outcome is a step change in alert quality. Instead of every line crossing and every motion event landing in the queue, operators receive a smaller stream of context-rich events, each one already triaged by the system before it ever reaches a human screen.

How AI Video Surveillance Extends Operator Capability Across Every Camera

The operational value of AI video surveillance is best measured in operator capability, not camera count.

Consider the architecture of a traditional GSOC. Priority feeds rotate across video walls. Alarms route in from PACS, intrusion panels, and point analytics. Operators try to maintain situational awareness across facilities that may span multiple cities, countries, or continents. Coverage is necessarily selective. Whatever is on the wall at any given moment is being watched. Whatever is not is, in effect, being recorded for later.

Intelligent monitoring inverts that model. Every camera becomes an active intelligence source rather than a passive recorder. Instead of asking an operator to watch each feed, the system continuously analyzes all feeds and surfaces only the moments that warrant attention, with behavioral context already attached. The operator's role shifts from scanning for anomalies to reviewing validated events and making informed response decisions.

That shift has three operational consequences worth naming clearly:

- Coverage becomes continuous. Every camera contributes to real-time situational awareness, not just the handful displayed on a video wall at any given moment.

- Alert quality improves. Operators receive events filtered through contextual reasoning, with enough information attached to support fast, confident decisions.

- Scale decouples from headcount. Adding cameras no longer adds proportional load to the human team.

The operator stays central. Judgment, ethical reasoning, and response leadership remain uniquely human strengths. What changes is what reaches them: validated events instead of raw noise. The human stays in the loop; they just stop being the bottleneck.

Making Enterprise Security Camera Footage Searchable and Continuously Analyzed

Real-time monitoring is half the picture. The other half is everything that has already been recorded.

Raw video is unstructured by default. Without metadata, an investigation means scrubbing through hours of footage across dozens of cameras, hoping to find the right person, the right object, or the right moment in time. Investigations that should take minutes routinely take days. The footage exists; the answers do not, until someone goes looking for them frame by frame.

Modern AI architectures address this by generating structured metadata continuously, as video is captured. Every frame is indexed with information about people, objects, activities, and behavioral context, and that index transforms raw footage into a searchable intelligence layer. Operators can ask questions in natural language rather than in camera IDs and timestamps: a query like "person in a red jacket near the loading dock between 2 and 4 p.m." can return results across thousands of cameras in seconds.
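The sketch below shows, in Python, what such an index might look like and how the example query could be answered once it has been translated into structured filters. The `FrameMetadata` schema, the attribute names, and the `search` function are hypothetical. Translating natural language into these filters (the part a VLM would handle) is out of scope, and a linear scan stands in for the distributed index an enterprise deployment would actually need.

```python
from dataclasses import dataclass, field
from datetime import datetime, time
from typing import List


@dataclass
class FrameMetadata:
    camera_id: str
    timestamp: datetime
    zone: str
    # Hypothetical per-object attributes, e.g.
    # {"type": "person", "color": "red", "garment": "jacket"}
    objects: List[dict] = field(default_factory=list)


def search(index, *, obj_type, zone, start, end, **attrs):
    """Return records in `zone` between `start` and `end` (time of day)
    containing an object of `obj_type` whose attributes match `attrs`."""
    hits = []
    for rec in index:
        if rec.zone != zone or not (start <= rec.timestamp.time() < end):
            continue
        if any(obj.get("type") == obj_type
               and all(obj.get(k) == v for k, v in attrs.items())
               for obj in rec.objects):
            hits.append(rec)
    return hits


red_jacket = {"type": "person", "color": "red", "garment": "jacket"}
index = [  # illustrative records only
    FrameMetadata("cam-12", datetime(2024, 5, 6, 14, 35), "loading_dock", [red_jacket]),
    FrameMetadata("cam-12", datetime(2024, 5, 6, 17, 10), "loading_dock", [red_jacket]),
    FrameMetadata("cam-07", datetime(2024, 5, 6, 15, 0), "lobby", [red_jacket]),
    FrameMetadata("cam-13", datetime(2024, 5, 6, 15, 20), "loading_dock",
                  [{"type": "person", "color": "blue", "garment": "jacket"}]),
]

# "person in a red jacket near the loading dock between 2 and 4 p.m."
hits = search(index, obj_type="person", zone="loading_dock",
              start=time(14), end=time(16), color="red", garment="jacket")
for h in hits:
    print(h.camera_id, h.timestamp)
```

Only the 14:35 record from `cam-12` matches; the others fail on time, zone, or color. Because the metadata is written at capture time, the query never touches the video itself.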

Delivering that capability at enterprise scale is as much an architectural problem as an AI problem. It requires distributed processing that runs analytics close to the video source, continuous metadata generation pipelines, and a system designed to support both live alerting and rapid forensic search against years of archived footage. The detection models matter. The architecture that lets those models run continuously across thousands of feeds, at the speed security operations actually demand, matters just as much.

The shift from passive recording to active intelligence is, fundamentally, a shift in what video is for. Not evidence collected for later review. A live, queryable understanding of the physical environment, available the moment a question is asked.

The Industry's Path Forward: From Surveillance to Agentic Physical Security

The trajectory across all of the above is consistent. Static rules give way to contextual reasoning. Selective coverage gives way to continuous awareness. Reactive review gives way to proactive prevention. The terminal point of that trajectory has a name: Agentic Physical Security.

Agentic Physical Security is defined by a continuous see → think → assess → act loop, in which AI perceives the physical environment, understands what it is seeing, assesses risk in context, and initiates appropriate action at machine speed. It is autonomy with the human still in the loop, but no longer the bottleneck. It is the architectural endpoint of the journey from "cameras that record" to "cameras that understand."
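A minimal Python sketch of the see → think → assess → act loop, with the human kept in the loop, might look like the following. Every callable (`see`, `think`, `assess`, `notify_operator`, `auto_respond`) and both thresholds are hypothetical placeholders for a perception model, a reasoning model, a risk policy, and an operator console. Nothing here reflects Ambient's actual implementation.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Event:
    camera_id: str
    summary: str
    risk: float  # 0.0 (benign) to 1.0 (critical)


def agentic_loop(
    frames: Iterable[dict],
    see: Callable[[dict], dict],
    think: Callable[[dict], str],
    assess: Callable[[str, dict], float],
    notify_operator: Callable[[Event], None],
    auto_respond: Callable[[Event], None],
    review_at: float = 0.5,   # hypothetical: below this, nothing reaches a human
    respond_at: float = 0.9,  # hypothetical: at or above this, act immediately
) -> List[Event]:
    surfaced = []
    for frame in frames:
        observation = see(frame)             # see: perceive the scene
        summary = think(observation)         # think: interpret what is happening
        risk = assess(summary, observation)  # assess: score risk in context
        if risk < review_at:
            continue                         # routine activity is filtered out
        event = Event(frame["camera_id"], summary, risk)
        if risk >= respond_at:
            auto_respond(event)              # act: start a pre-approved response
        notify_operator(event)               # every surfaced event reaches a human
        surfaced.append(event)
    return surfaced


frames = [{"camera_id": "c1", "risk": 0.1},
          {"camera_id": "c2", "risk": 0.6},
          {"camera_id": "c3", "risk": 0.95}]
notified, responded = [], []
out = agentic_loop(
    frames,
    see=lambda f: f,
    think=lambda o: f"activity on {o['camera_id']}",
    assess=lambda s, o: o["risk"],  # stub: a real policy would reason over `s`
    notify_operator=notified.append,
    auto_respond=responded.append,
)
print([e.camera_id for e in out])  # ['c2', 'c3']
```

The design choice worth noting is that automatic action never bypasses the operator: the highest-risk event triggers a response and is still routed to a human, while low-risk activity never reaches the queue at all.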

This is the category Ambient.ai created and leads. The Ambient platform is powered by Ambient Pulsar, the first edge-optimized reasoning Vision-Language Model purpose-built for physical security, and is designed to work with the cameras, sensors, and access control systems enterprises already have in place. It continuously identifies and assesses 150+ verified threat signatures in real time, eliminates over 90% of false alarms, and helps resolve more than 90% of alerts in under one minute. Ambient Advanced Forensics makes every recorded frame searchable in seconds.

The result, for security leaders, is a physical security program that finally matches the scale of the infrastructure it is responsible for protecting, and that closes the gap between footage captured and footage understood.
